Linearly Separable SVM

import numpy as np
import matplotlib.pyplot as plt
from sklearn.svm import SVC  # "Support vector classifier"


# plot_svc_decision_function draws the separating hyperplane and the
# margin hyperplanes on either side of it
def plot_svc_decision_function(model, ax=None, plot_support=True):
    """Plot the decision function for a 2D SVC"""
    if ax is None:
        ax = plt.gca()
    xlim = ax.get_xlim()
    ylim = ax.get_ylim()

    # Build a grid on which to evaluate the model
    x = np.linspace(xlim[0], xlim[1], 30)
    y = np.linspace(ylim[0], ylim[1], 30)
    Y, X = np.meshgrid(y, x)
    xy = np.vstack([X.ravel(), Y.ravel()]).T
    P = model.decision_function(xy).reshape(X.shape)

    # Draw the hyperplanes
    ax.contour(X, Y, P, colors='k',
               levels=[-1, 0, 1], alpha=0.5,
               linestyles=['--', '-', '--'])

    # Mark the support vectors
    if plot_support:
        ax.scatter(model.support_vectors_[:, 0], model.support_vectors_[:, 1],
                   s=300, linewidth=1, edgecolors='blue', facecolors='none')
    ax.set_xlim(xlim)
    ax.set_ylim(ylim)


# Generate sample data with make_blobs
# (sklearn.datasets.samples_generator was removed in newer scikit-learn releases)
from sklearn.datasets import make_blobs
X, y = make_blobs(n_samples=50, centers=2, random_state=0, cluster_std=0.60)

# Classify the samples with a linear-kernel SVM
model = SVC(kernel='linear')
model.fit(X, y)

# Plot the samples in Cartesian coordinates
plt.scatter(X[:, 0], X[:, 1], c=y, s=50, cmap='autumn')

# Draw the separating hyperplane, the margin hyperplanes and the support vectors
plot_svc_decision_function(model)
plt.show()

Result

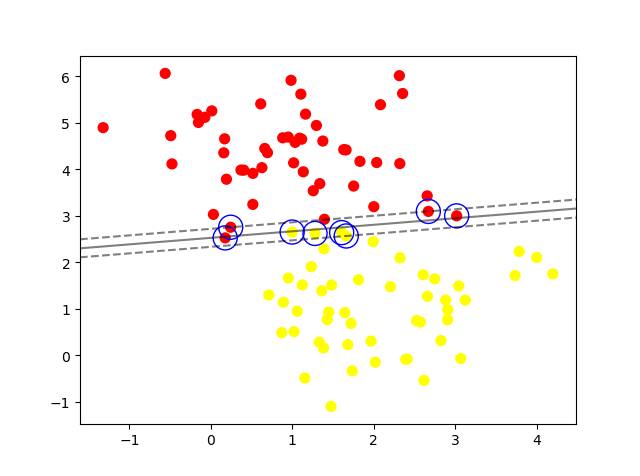
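The contour levels `[-1, 0, 1]` passed to `ax.contour` in `plot_svc_decision_function` have a simple geometric reading for a linear kernel: the decision function is `w·x + b`, level 0 is the separating hyperplane, and levels -1 and +1 are the two margin hyperplanes, which lie `2 / ||w||` apart. The sketch below illustrates this with plain Python. The values of `w` and `b` are hypothetical, picked only to make the arithmetic easy to follow; they are not taken from the fitted model.

```python
import math


def decision_value(w, b, x):
    """Signed score w·x + b; its sign tells which side of the hyperplane x is on."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def margin_width(w):
    """Distance between the hyperplanes w·x + b = -1 and w·x + b = +1."""
    return 2.0 / math.sqrt(sum(wi * wi for wi in w))


# Hypothetical hyperplane parameters, chosen only for illustration.
w = [3.0, 4.0]
b = -5.0

on_boundary = decision_value(w, b, [1.0, 0.5])       # 3 + 2 - 5
on_positive_margin = decision_value(w, b, [2.0, 0.0])  # 6 + 0 - 5
on_negative_margin = decision_value(w, b, [0.0, 1.0])  # 0 + 4 - 5

print(on_boundary, on_positive_margin, on_negative_margin)
print(margin_width(w))  # 2 / ||w|| with ||w|| = 5
```

For a fitted linear `SVC`, the corresponding values are exposed as `model.coef_` and `model.intercept_`, so the same formula gives the width of the margin drawn in the plot.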

Linear SVM

The case where samples of different classes overlap.

import numpy as np
import matplotlib.pyplot as plt
from sklearn.svm import SVC  # "Support vector classifier"


# plot_svc_decision_function draws the separating hyperplane and the
# margin hyperplanes on either side of it
def plot_svc_decision_function(model, ax=None, plot_support=True):
    """Plot the decision function for a 2D SVC"""
    if ax is None:
        ax = plt.gca()
    xlim = ax.get_xlim()
    ylim = ax.get_ylim()

    # Build a grid on which to evaluate the model
    x = np.linspace(xlim[0], xlim[1], 30)
    y = np.linspace(ylim[0], ylim[1], 30)
    Y, X = np.meshgrid(y, x)
    xy = np.vstack([X.ravel(), Y.ravel()]).T
    P = model.decision_function(xy).reshape(X.shape)

    # Draw the hyperplanes
    ax.contour(X, Y, P, colors='k',
               levels=[-1, 0, 1], alpha=0.5,
               linestyles=['--', '-', '--'])

    # Mark the support vectors
    if plot_support:
        ax.scatter(model.support_vectors_[:, 0], model.support_vectors_[:, 1],
                   s=300, linewidth=1, edgecolors='blue', facecolors='none')
    ax.set_xlim(xlim)
    ax.set_ylim(ylim)


# Generate sample data with make_blobs
# (sklearn.datasets.samples_generator was removed in newer scikit-learn releases)
from sklearn.datasets import make_blobs
# X, y = make_blobs(n_samples=50, centers=2, random_state=0, cluster_std=0.60)
# More samples and a larger spread, so the two classes overlap
X, y = make_blobs(n_samples=100, centers=2, random_state=0, cluster_std=0.9)

# Classify the samples with a linear-kernel SVM
model = SVC(kernel='linear')
model.fit(X, y)

# Plot the samples in Cartesian coordinates
plt.scatter(X[:, 0], X[:, 1], c=y, s=50, cmap='autumn')

# Draw the separating hyperplane, the margin hyperplanes and the support vectors
plot_svc_decision_function(model)
plt.show()

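Result

Increasing the penalty coefficient C

The SVC class has a parameter C, the penalty coefficient applied to the error term. The higher C is set, the less tolerance the model has for samples that violate the margin, and the harder the separating margin becomes.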
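To make the role of C concrete, the sketch below evaluates the standard soft-margin objective, `0.5 * ||w||^2 + C * sum(max(0, 1 - y_i * (w·x_i + b)))`, for two hand-picked candidate hyperplanes on a tiny one-dimensional data set. Everything in it is an illustrative assumption: the data, the two candidates and the helper names are made up for this example, and neither candidate is claimed to be the optimum that `SVC` would actually find.

```python
def soft_margin_objective(w, b, C, samples):
    """0.5 * w^2 + C * (sum of hinge losses), for 1-D inputs."""
    slack = sum(max(0.0, 1.0 - label * (w * x + b)) for x, label in samples)
    return 0.5 * w * w + C * slack


# Toy 1-D data: (x, label). The positive sample at x = -1 sits close to
# the negative class and plays the role of an outlier.
samples = [(-3.0, -1), (-2.0, -1), (-1.0, +1), (2.0, +1), (3.0, +1)]

# Candidate 1: wide margin (w = 0.5, b = 0), which misclassifies the outlier.
wide = (0.5, 0.0)
# Candidate 2: narrow margin (w = 2, b = 3), which separates every sample.
narrow = (2.0, 3.0)


def preferred(C):
    """Return the name of the candidate with the lower objective for this C."""
    wide_cost = soft_margin_objective(wide[0], wide[1], C, samples)
    narrow_cost = soft_margin_objective(narrow[0], narrow[1], C, samples)
    return "wide" if wide_cost < narrow_cost else "narrow"


for C in (0.1, 100.0):
    print(C, preferred(C))
```

With a small C the slack of the outlier is cheap, so the wide margin has the lower cost; with a large C the same slack dominates the objective and the narrow, fully separating hyperplane wins. This is the sense in which a higher C makes the margin "harder".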

import numpy as np
import matplotlib.pyplot as plt
from sklearn.svm import SVC  # "Support vector classifier"


# plot_svc_decision_function draws the separating hyperplane and the
# margin hyperplanes on either side of it
def plot_svc_decision_function(model, ax=None, plot_support=True):
    """Plot the decision function for a 2D SVC"""
    if ax is None:
        ax = plt.gca()
    xlim = ax.get_xlim()
    ylim = ax.get_ylim()

    # Build a grid on which to evaluate the model
    x = np.linspace(xlim[0], xlim[1], 30)
    y = np.linspace(ylim[0], ylim[1], 30)
    Y, X = np.meshgrid(y, x)
    xy = np.vstack([X.ravel(), Y.ravel()]).T
    P = model.decision_function(xy).reshape(X.shape)

    # Draw the hyperplanes
    ax.contour(X, Y, P, colors='k',
               levels=[-1, 0, 1], alpha=0.5,
               linestyles=['--', '-', '--'])

    # Mark the support vectors
    if plot_support:
        ax.scatter(model.support_vectors_[:, 0], model.support_vectors_[:, 1],
                   s=300, linewidth=1, edgecolors='blue', facecolors='none')
    ax.set_xlim(xlim)
    ax.set_ylim(ylim)


# Generate sample data with make_blobs
# (sklearn.datasets.samples_generator was removed in newer scikit-learn releases)
from sklearn.datasets import make_blobs
# X, y = make_blobs(n_samples=50, centers=2, random_state=0, cluster_std=0.60)
X, y = make_blobs(n_samples=100, centers=2, random_state=0, cluster_std=0.9)

# Classify the samples with a linear-kernel SVM, now with a larger penalty C
# model = SVC(kernel='linear')
model = SVC(kernel='linear', C=100.0)
model.fit(X, y)

# Plot the samples in Cartesian coordinates
plt.scatter(X[:, 0], X[:, 1], c=y, s=50, cmap='autumn')

# Draw the separating hyperplane, the margin hyperplanes and the support vectors
plot_svc_decision_function(model)
plt.show()

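Result

Data that is not linearly separable at all

When the data cannot be separated by any straight line, an RBF kernel can be used to separate the samples in a higher-dimensional space.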
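The `plot_3D` helper in this section's script lifts each 2D point to a third coordinate `r = exp(-||x||^2)`. The sketch below shows, in plain Python, why such a lift helps: two concentric rings that no line can separate in 2D end up at clearly different heights, so a single threshold on `r` splits them. Note the assumptions: the rings here are noise-free and generated by a hypothetical `ring` helper rather than by `make_circles`, and `r` is one radial basis function centered at the origin, which is a simplification of what the RBF kernel `exp(-gamma * ||x - x'||^2)` does implicitly.

```python
import math


def radial_feature(point):
    """r = exp(-||x||^2), the same lift that plot_3D applies."""
    return math.exp(-sum(c * c for c in point))


def ring(radius, n=8):
    """n evenly spaced 2D points on a circle of the given radius (hypothetical helper)."""
    return [(radius * math.cos(2 * math.pi * k / n),
             radius * math.sin(2 * math.pi * k / n)) for k in range(n)]


inner = ring(0.1)  # inner class, close to the origin
outer = ring(1.0)  # outer class, surrounding the inner one

r_inner = [radial_feature(p) for p in inner]  # all close to exp(-0.01)
r_outer = [radial_feature(p) for p in outer]  # all close to exp(-1)

# Any height between the two groups works as a separating plane r = threshold.
threshold = 0.5 * (min(r_inner) + max(r_outer))
print(min(r_inner), max(r_outer), threshold)
```

In the lifted space the separating surface is the flat plane `r = threshold`; projected back to 2D it becomes a circle around the origin, which is the kind of boundary a linear kernel cannot produce.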

import numpy as np
import matplotlib.pyplot as plt
from sklearn.svm import SVC  # "Support vector classifier"


# plot_svc_decision_function draws the separating hyperplane and the
# margin hyperplanes on either side of it
def plot_svc_decision_function(model, ax=None, plot_support=True):
    """Plot the decision function for a 2D SVC"""
    if ax is None:
        ax = plt.gca()
    xlim = ax.get_xlim()
    ylim = ax.get_ylim()

    # Build a grid on which to evaluate the model
    x = np.linspace(xlim[0], xlim[1], 30)
    y = np.linspace(ylim[0], ylim[1], 30)
    Y, X = np.meshgrid(y, x)
    xy = np.vstack([X.ravel(), Y.ravel()]).T
    P = model.decision_function(xy).reshape(X.shape)

    # Draw the hyperplanes
    ax.contour(X, Y, P, colors='k',
               levels=[-1, 0, 1], alpha=0.5,
               linestyles=['--', '-', '--'])

    # Mark the support vectors
    if plot_support:
        ax.scatter(model.support_vectors_[:, 0], model.support_vectors_[:, 1],
                   s=300, linewidth=1, edgecolors='blue', facecolors='none')
    ax.set_xlim(xlim)
    ax.set_ylim(ylim)


# Generate sample data with make_blobs
# from sklearn.datasets import make_blobs
# X, y = make_blobs(n_samples=50, centers=2, random_state=0, cluster_std=0.60)
# X, y = make_blobs(n_samples=100, centers=2, random_state=0, cluster_std=0.9)

# Generate sample data with make_circles
# (sklearn.datasets.samples_generator was removed in newer scikit-learn releases)
from sklearn.datasets import make_circles
X, y = make_circles(100, factor=.1, noise=.1)

from mpl_toolkits import mplot3d  # registers the '3d' projection


def plot_3D(elev=30, azim=30, X=None, y=None):
    ax = plt.subplot(projection='3d')
    # Lift each point to a third coordinate with a radial basis function
    r = np.exp(-(X ** 2).sum(1))
    ax.scatter3D(X[:, 0], X[:, 1], r, c=y, s=50, cmap='autumn')
    ax.view_init(elev=elev, azim=azim)
    ax.set_xlabel('x')
    ax.set_ylabel('y')
    ax.set_zlabel('r')


# Classify the samples with a linear-kernel SVM
# model = SVC(kernel='linear')
# model = SVC(kernel='linear', C=10.0)

# Classify the samples with an RBF kernel, with the penalty coefficient
# raised to 100 (a hundred times the default of 1.0)
model = SVC(kernel='rbf', C=100)
model.fit(X, y)

# Plot the samples in Cartesian coordinates
# plt.scatter(X[:, 0], X[:, 1], c=y, s=50, cmap='autumn')

# Plot the samples in 3D coordinates
plot_3D(X=X, y=y)

# Draw the separating hyperplane, the margin hyperplanes and the support vectors
plot_svc_decision_function(model)
plt.show()

Result

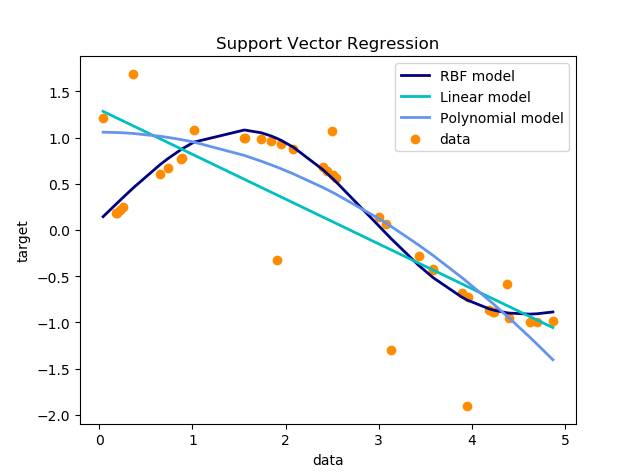

SVR

import numpy as np
from sklearn.svm import SVR
import matplotlib.pyplot as plt

# Generate sample data: 40 sorted points in [0, 5)
X = np.sort(5 * np.random.rand(40, 1), axis=0)

# Linear target
# y = np.ravel(2*X + 3)

# Curved target
# y = np.polyval([2,3,5,2], X).ravel()
y = np.sin(X).ravel()

# Add noise to every fifth sample (8 of the 40)
y[::5] += 3 * (0.5 - np.random.rand(8))

# Set up one model per kernel
svr_rbf = SVR(kernel='rbf', C=1e3, gamma=0.1)
svr_lin = SVR(kernel='linear', C=1e3)
svr_poly = SVR(kernel='poly', C=1e3, degree=2)

# Fit each model and predict on the training inputs
y_rbf = svr_rbf.fit(X, y).predict(X)
y_lin = svr_lin.fit(X, y).predict(X)
y_poly = svr_poly.fit(X, y).predict(X)

# Visualize the results
lw = 2
plt.scatter(X, y, color='darkorange', label='data')
plt.plot(X, y_rbf, color='navy', lw=lw, label='RBF model')
plt.plot(X, y_lin, color='c', lw=lw, label='Linear model')
plt.plot(X, y_poly, color='cornflowerblue', lw=lw, label='Polynomial model')
plt.xlabel('data')
plt.ylabel('target')
plt.title('Support Vector Regression')
plt.legend()
plt.show()
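The SVR models above differ from ordinary least-squares regression in how they score errors: SVR uses an epsilon-insensitive loss, so a prediction within `epsilon` of the target costs nothing, and larger errors are charged only for the part beyond `epsilon`. The script does not set `epsilon`, so scikit-learn's default of 0.1 applies. The sketch below computes that loss in plain Python; the function name and the sample values are hypothetical and only illustrate the formula.

```python
def epsilon_insensitive_loss(y_true, y_pred, epsilon=0.1):
    """Per-sample loss max(0, |y - f(x)| - epsilon)."""
    return [max(0.0, abs(t - p) - epsilon) for t, p in zip(y_true, y_pred)]


# Made-up targets and predictions, chosen to show the three regimes.
y_true = [1.0, 1.0, 1.0]
y_pred = [1.05, 1.5, 0.0]

losses = epsilon_insensitive_loss(y_true, y_pred)
for t, p, loss in zip(y_true, y_pred, losses):
    print(t, p, loss)
```

The first prediction falls inside the epsilon tube and contributes no loss, while the other two are penalized linearly in the amount by which they exceed it. In the objective, the parameter C (here `1e3`) multiplies the sum of these losses, in the same way it scales the error term for `SVC`.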

Result